Local Search

Background

The goal of the Local Search algorithm is to start with a good hyperparameter configuration and test whether it can be improved. The starting configuration could have been obtained through one of the other algorithms or from hand-tuning. The algorithm starts by evaluating the seed_configuration. It then perturbs one parameter at a time. If a new configuration achieves a better objective value than the seed, the new configuration becomes the new seed.

For Continuous or Discrete variables, perturbations are applied as multiplication by a factor. The default factors are 0.8 and 1.2. These can be modified via the perturbation_factors argument. For Ordinal variables, the parameter is shifted one position up or down in the provided values. For Choice variables, another choice is randomly sampled.
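The perturbation rules above can be sketched in plain Python. This is an illustration only, not Sherpa's implementation: the function name `perturb`, the string labels for the variable kinds, and the rounding of Discrete values back to integers are all assumptions, and clipping to the parameter's range is left out.

```python
import random


def perturb(kind, value, factors=(0.8, 1.2), values=None, rng=random):
    """Return candidate values for one parameter (hypothetical helper)."""
    if kind == "continuous":
        # Multiply by each perturbation factor.
        return [value * f for f in factors]
    if kind == "discrete":
        # Assumption: the product is rounded back to an integer.
        return [int(round(value * f)) for f in factors]
    if kind == "ordinal":
        # Shift one position down and one position up in the provided values.
        i = values.index(value)
        return [values[j] for j in (i - 1, i + 1) if 0 <= j < len(values)]
    if kind == "choice":
        # Randomly sample one of the other choices.
        others = [v for v in values if v != value]
        return [rng.choice(others)] if others else []
    raise ValueError(f"unknown variable kind: {kind}")


print(perturb("continuous", 0.5))
print(perturb("ordinal", 64, values=[32, 64, 128]))
```

An Ordinal value at either end of its list yields only one candidate, since there is no position to shift to on the other side.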

Because the Local Search algorithm is meant to fine-tune a hyperparameter configuration, it also has an option to repeat trials. The repeat_trials argument takes an integer that indicates how many times each hyperparameter configuration should be evaluated. Since performance differences caused by local changes may be small, repeating trials helps to establish whether a difference is significant.
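One way to see why repeated trials help is to compare the mean objective of two configurations against the spread across their repeats. The sketch below is a simple heuristic under stated assumptions, not the criterion Sherpa applies: the helper names `summarize` and `clearly_better` are hypothetical, and the objective values are made-up numbers for illustration.

```python
import statistics


def summarize(objectives):
    """Return the mean and the standard error of the mean of repeated trials."""
    mean = statistics.mean(objectives)
    sem = statistics.stdev(objectives) / len(objectives) ** 0.5
    return mean, sem


def clearly_better(candidate, seed, lower_is_better=True):
    """True if the candidate's mean beats the seed's by more than the combined standard error."""
    c_mean, c_sem = summarize(candidate)
    s_mean, s_sem = summarize(seed)
    gap = (s_mean - c_mean) if lower_is_better else (c_mean - s_mean)
    return gap > (c_sem ** 2 + s_sem ** 2) ** 0.5


# Made-up validation losses from three repeats of each configuration.
seed_runs = [0.100, 0.104, 0.098]
close_runs = [0.099, 0.103, 0.101]
better_runs = [0.080, 0.082, 0.081]

print(clearly_better(close_runs, seed_runs))
print(clearly_better(better_runs, seed_runs))
```

With a single trial per configuration there is no spread to estimate, so a comparison of this kind needs at least two repeats.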

class sherpa.algorithms.LocalSearch(seed_configuration, perturbation_factors=(0.8, 1.2), repeat_trials=1)

Local Search Algorithm.

This algorithm expects to start with a very good hyperparameter configuration. It changes one hyperparameter at a time to see if better results can be obtained.

Parameters:

- seed_configuration (dict) – hyperparameter configuration to start with.

- perturbation_factors (Union[tuple,list]) – Continuous and Discrete parameters will be multiplied by these.

- repeat_trials (int) – number of times that identical configurations are repeated to test for random fluctuations.

Example

In this example we will work with the MNIST fully connected neural network from the Bayesian Optimization tutorial. We had tuned initial learning rate, learning rate decay, momentum, and dropout rate. The top parameter configuration we obtained was:

- initial learning rate: 0.038

- learning rate decay: 1.2e-4

- momentum: 0.92

- dropout: 0.

These values are rounded to two significant digits. We use this configuration as the seed_configuration in the Local Search. We set perturbation_factors to (0.9, 1.1). The algorithm multiplies one parameter at a time by 0.9 or 1.1 and checks whether these local changes improve performance. If all changes have been tried and none improves on the seed configuration, the algorithm stops. The example can be run as:

cd sherpa/examples/mnistmlp/

python runner.py --algorithm LocalSearch

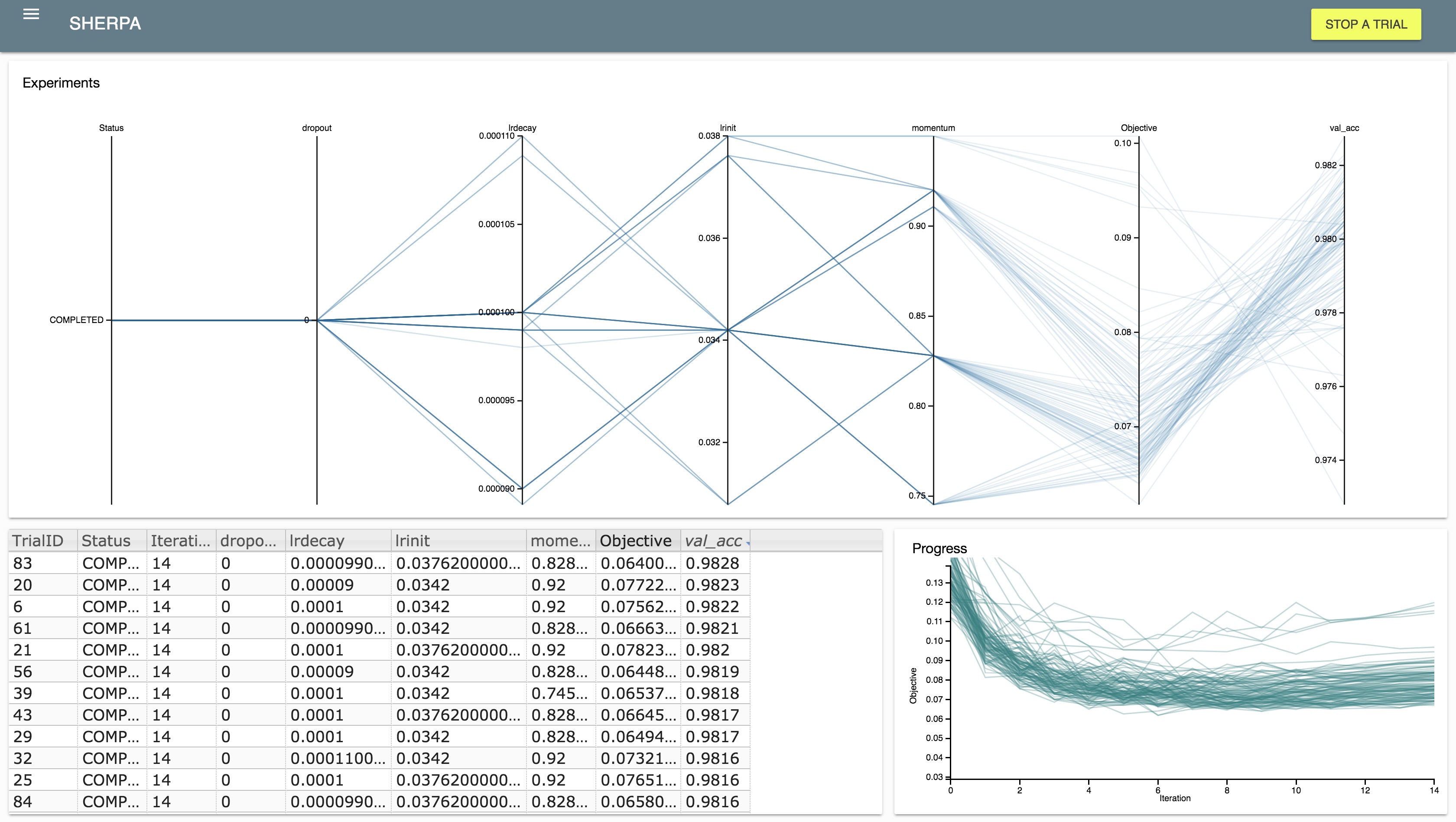

After running, we can inspect the results in the dashboard:

We find that fluctuations in performance due to random initialization are larger than the differences caused by small changes to the hyperparameters.
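To make the procedure in this example concrete, the following sketch runs the same kind of search on a toy objective instead of a real training run. It is an interpretation of the description, not Sherpa's code: the dictionary keys, the `TOY_TARGET` values, the `toy_objective` function, and the `max_passes` safety limit are all assumptions introduced for illustration, and only three of the seed values listed above are used.

```python
import math

# Seed values taken from the example above; the dictionary keys are hypothetical names.
SEED = {"lr": 0.038, "lr_decay": 1.2e-4, "momentum": 0.92}

# Made-up optimum, used only so that the toy objective has something to find.
TOY_TARGET = {"lr": 0.03, "lr_decay": 1.0e-4, "momentum": 0.9}


def toy_objective(config):
    """Stand-in for a training run: squared log-distance to TOY_TARGET (lower is better)."""
    return sum(math.log(config[k] / TOY_TARGET[k]) ** 2 for k in config)


def local_search(seed, objective, factors=(0.9, 1.1), max_passes=100):
    """Perturb one parameter at a time; stop after a full pass with no improvement."""
    best, best_value = dict(seed), objective(seed)
    for _ in range(max_passes):
        improved = False
        for name in seed:
            for factor in factors:
                candidate = dict(best)
                candidate[name] = best[name] * factor
                value = objective(candidate)
                if value < best_value:  # the candidate becomes the new seed
                    best, best_value = candidate, value
                    improved = True
        if not improved:
            break
    return best, best_value


best, best_value = local_search(SEED, toy_objective)
print(best, best_value)
```

Because the toy objective is deterministic, every accepted change is a real improvement. In an actual training run the objective is noisy, which is where repeat_trials becomes relevant.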